Rainforest QA blends no-code automation with crowdsourced human testing. For teams without in-house QA, that hybrid model sounds appealing on paper.

But most teams searching for Rainforest alternatives aren’t dissatisfied with “no-code” testing in principle. They’re hitting a specific wall:

- Crowd-based execution introduces variability — a human tester’s interpretation of a test step isn’t the same thing twice.

- Pricing scales unpredictably with usage and crowd involvement.

- Step-by-step test creation is slower than background recording.

- No local execution — everything runs on virtual machines in the cloud, adding latency and cost.

- Limited debugging control when tests fail — while Rainforest QA provides detailed test results with browser logs and generative AI explanations, some users find these insufficient for deep debugging.

While Rainforest QA offers features like generative AI to auto-update tests, parallel execution, and detailed test results, these may not fully address the needs for rapid, local, or deeply customizable debugging.

If your goal is deterministic, repeatable web automation at a predictable cost, there are better-fit tools. Here’s the honest shortlist.

🎯 Best Rainforest QA Alternatives — Shortlist

The alternatives below are grouped by the kind of team each one suits best.

BugBug – Startups and SaaS teams wanting self-managed codeless web testing with local execution, a free plan, and flat pricing.

TestSigma – Non-technical teams needing AI-powered coverage across web, mobile, and API in a single platform.

Autify – Agile teams wanting unified no-code web and mobile automation with solid CI/CD integration and no crowd-testing model.

LambdaTest – Teams that already have test scripts and need broad cross-browser cloud execution across 2,000+ browser and OS combinations.

Mabl – Enterprise DevOps teams running continuous testing at scale who need AI-driven test maintenance and deep pipeline integration.

QA Wolf – Teams that want fully managed automated browser testing with a zero-flake guarantee — without building in-house automation capability.

Testim – Enterprise web teams managing large UI test suites where frequent interface changes make AI self-healing worth the cost.

Global App Testing – Teams that want the human testing model with faster turnaround and broader device and geography coverage than Rainforest provides.

Sauce Labs – Enterprise teams with existing test scripts needing comprehensive cross-browser execution infrastructure, analytics, and error monitoring.

Virtuoso QA – Non-technical teams that prefer writing tests in natural language and want a more actively developed platform than Rainforest QA.

Cypress / Playwright – Developer-led teams wanting full open-source framework control with their own execution infrastructure.

Selenium – Teams with existing multi-language automation ecosystems needing maximum cross-browser flexibility and a mature open-source community.

Looking for a self-managed alternative that gets your first test running in under 10 minutes?

Test easier than ever with BugBug test recorder. Faster than coding. Free forever.

What Is Rainforest QA? Why Do Teams Look for an Alternative?

Rainforest QA is a no-code platform designed to simplify test automation for web and mobile applications. It combines codeless test automation with on-demand crowdsourced human testers, making it accessible to teams that want to validate their applications without building a QA team from scratch. Testers from a global crowd execute test cases against real browsers and devices, providing a hybrid of human judgment and automated consistency.

Rainforest QA enables test creation and maintenance in plain English, but some users experience test brittleness and challenges with maintaining tests as applications evolve.

Best for:

- Product teams with zero in-house QA who want fast web validation

- Teams that value human insight on edge cases alongside automation

- Organizations comfortable with usage-based pricing and cloud-only execution

Rainforest’s crowdsourced model is its biggest differentiator — and its biggest trade-off. Human testers can catch what automation misses. But they also introduce inconsistency, slower feedback cycles, and pricing that scales with test volume rather than team size.

Teams typically leave Rainforest when:

- They want fast, automated feedback on every deploy without waiting for human testers

- Costs become unpredictable as test suites grow

- They need step-by-step debugging control that crowd testing can’t provide

- They want to run tests locally during development — not only on cloud VMs

- They need to automate regression runs on a schedule — every hour, every deploy — not on a crowd-dependent timeline

- They need broader coverage across browsers and browser versions than Rainforest supports

Rainforest QA does include generative AI for auto-updating tests, parallel execution, and detailed test results. Even so, its reliance on crowd testers can slow down test execution, its costs rise as test volume increases, and its reporting and analytics may be less advanced than those of some alternatives.
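To see why pricing that scales with test volume is harder to budget for than a flat fee, it can help to run the numbers. The sketch below compares the two models. The $189 flat fee is the figure quoted in this article; the per-run rate is a made-up placeholder, not a published Rainforest QA price, so read the result as an illustration of the shape of the curve rather than a quote.

```python
import math

# Flat monthly price quoted in this article's comparison table.
FLAT_MONTHLY_FEE = 189.00
# ASSUMPTION: hypothetical usage-based cost per test run, for illustration only.
ASSUMED_COST_PER_RUN = 0.50


def usage_based_cost(runs_per_month: int, cost_per_run: float = ASSUMED_COST_PER_RUN) -> float:
    """Monthly bill when every test run is charged individually."""
    return runs_per_month * cost_per_run


def break_even_runs(flat_fee: float = FLAT_MONTHLY_FEE, cost_per_run: float = ASSUMED_COST_PER_RUN) -> int:
    """Smallest number of monthly runs at which usage billing reaches the flat fee."""
    return math.ceil(flat_fee / cost_per_run)


# Compare the two models at a few suite sizes.
for runs in (100, 500, 2000, 10000):
    print(f"{runs:>6} runs/month: usage-based ${usage_based_cost(runs):,.2f} vs flat ${FLAT_MONTHLY_FEE:,.2f}")

print("Break-even at", break_even_runs(), "runs per month (under the assumed rate)")
```

The flat fee stays constant while the usage-based bill grows linearly with every extra scheduled run, which is why hourly or per-deploy regression runs shift the comparison so quickly.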

Rainforest QA Alternatives — Low-Code & Codeless Test Automation Tools

BugBug

Best for: Startups, SaaS teams, and web-first product companies that want deterministic, self-managed E2E automation — without crowd variability, VM overhead, or unpredictable usage pricing.

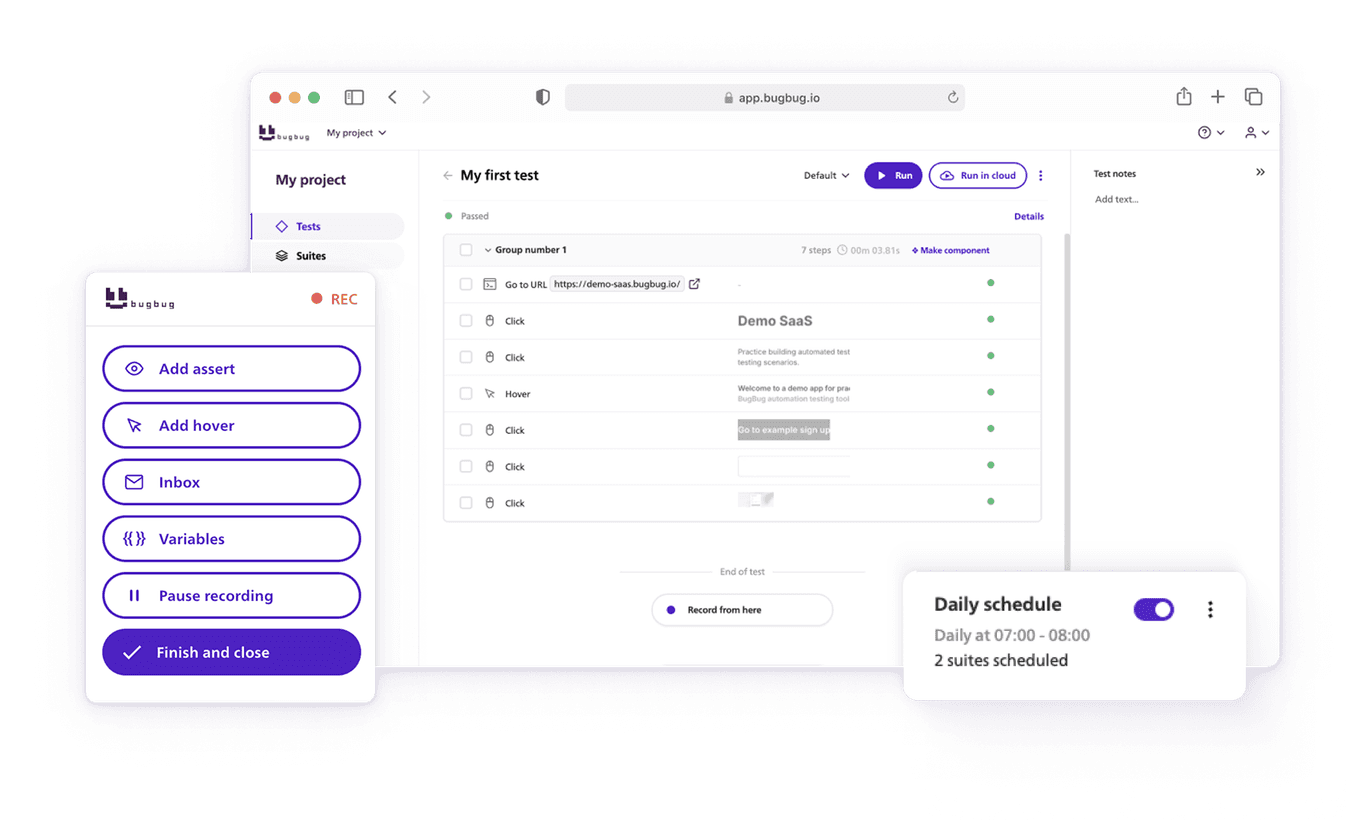

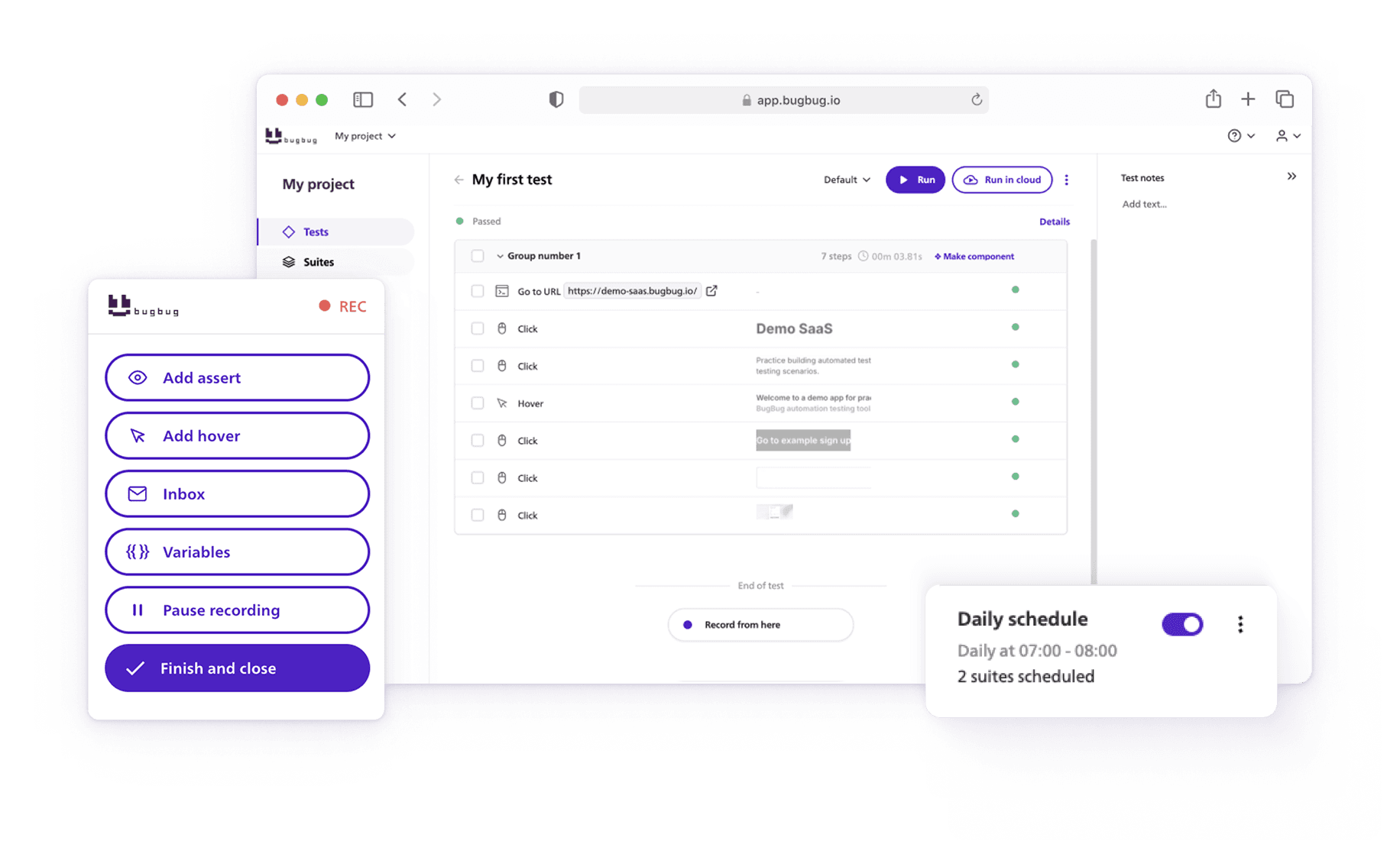

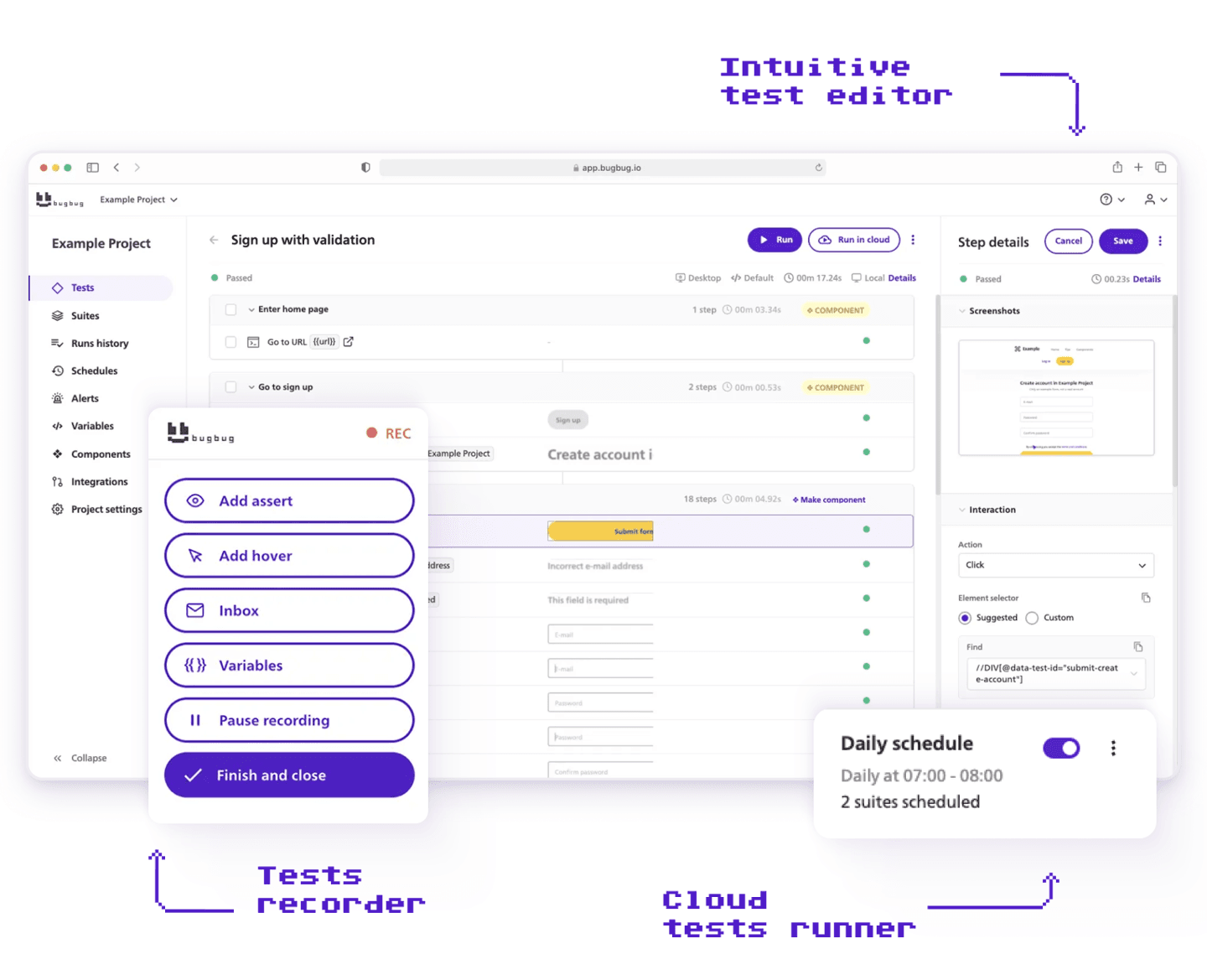

BugBug is an easy-to-use web testing tool that helps teams build reliable regression coverage for their web applications — without writing a single line of code or managing any infrastructure. Using a Chrome extension-based web test recorder, teams can interact with their app to capture user flows directly in the browser, which simplifies test creation and enables automated tests in minutes. Tests can be run locally or in the cloud on a schedule. With features like Edit & Rewind and smart waiting conditions, BugBug keeps test maintenance low even as your web app grows and changes.

Strengths:

- No-code test creation: Record clicks, inputs, and flows with a Chrome extension and turn them into automated web tests instantly, with no framework or setup required.

- Low maintenance: Edit & Rewind and smart waits reduce flaky, brittle tests and keep your web test suite healthy without ongoing manual upkeep.

- Local testing support: Run tests on your own machine during development, as well as in the cloud.

- Unlimited execution: Run tests locally or in the cloud without run limits, and schedule suites to monitor your web app's health continuously.
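For readers curious what "smart waits" mean in practice, the general idea is to poll for a condition instead of sleeping for a fixed time. The sketch below shows that generic technique; it is not BugBug's implementation, and `wait_until` and `FakePage` are hypothetical names invented for the example.

```python
import time
from typing import Callable


def wait_until(condition: Callable[[], bool], timeout: float = 5.0, interval: float = 0.05) -> bool:
    """Poll `condition` until it returns True or `timeout` seconds have passed."""
    deadline = time.monotonic() + timeout
    while True:
        if condition():
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval)


class FakePage:
    """Hypothetical stand-in for a page whose button becomes clickable after a few polls."""

    def __init__(self, ready_after_polls: int) -> None:
        self.polls = 0
        self.ready_after_polls = ready_after_polls

    def button_is_clickable(self) -> bool:
        self.polls += 1
        return self.polls >= self.ready_after_polls


page = FakePage(ready_after_polls=3)
ready = wait_until(page.button_is_clickable, timeout=2.0)
print("clickable:", ready, "after", page.polls, "polls")
```

Compared with a fixed `sleep`, this kind of wait proceeds as soon as the element is ready and fails with a clear timeout when it never becomes ready, which removes one common source of flaky tests.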

Limitations:

- Chromium/Chrome only: BugBug runs tests in Chromium-based browsers. If cross-browser coverage across Firefox or Safari is a hard requirement, you’ll need to supplement with another tool.

- No deep framework customization: Teams that need complex data-driven scripting or framework-level control beyond pragmatic JavaScript support will find dedicated frameworks like Playwright or Cypress a better fit.

BugBug vs Rainforest QA

The core difference between BugBug and Rainforest isn't pricing or features; it's a fundamentally different answer to what “testing” means for your team. BugBug focuses on a streamlined, fully automated process that lets anyone create, maintain, and scale tests without coding, while Rainforest pairs automation with human testers.

Rainforest answers: a human validated that this flow works today. BugBug answers: this exact sequence of steps passed or failed, deterministically, in 30 seconds.

For regression testing — catching whether a code change broke an existing flow — deterministic automation wins every time. BugBug offers more consistent test coverage and less test brittleness compared to Rainforest QA’s crowd-based approach. For exploratory testing and novel edge cases, human testers offer something automation can’t replicate. Most teams need both eventually. But for the regression and CI/CD use case that drives most automation purchases, BugBug is the faster, cheaper, and more predictable starting point.

| Feature | BugBug | Rainforest QA |
|---|---|---|
| Pricing model | Free plan + flat $189/month | Usage-based + crowd costs (contact for quote) |
| Local test runs | Yes, included on free plan | Not available |
| Test execution | Fully automated, deterministic | Automated + human crowd testers |
| Test creation | Background recorder while you browse | Step-by-step manual creation |
| Test creation and maintenance | Codeless, easy to update, minimal upkeep | Manual, requires ongoing human input |
| Test automation process | Streamlined, scalable, integrates with CI/CD | Crowd-based, less automation control |
| Debugging | Edit & Rewind from any step | Limited debugging control |
| Scheduling | Yes, run on any interval | Crowd-dependent, not hourly |
| Browser coverage | Chromium-based | Chrome, Firefox, Edge |
| Mobile testing | Not supported | Supported |
| Unlimited users | Yes, all paid plans | User limits apply |
| Free plan | Yes | No |
| Test results | Comprehensive logs, video recordings, and failure explanations | Browser logs and AI-generated explanations, which some users find insufficient for deep debugging |

Choose BugBug If:

- You primarily test web applications in Chromium browsers

- You want automated, deterministic feedback on every deploy — not crowd-dependent scheduling

- You need to run tests locally during development without cloud costs

- Predictable flat pricing matters more than hybrid crowd coverage

- Your team wants to be testing in days, not weeks

Choose Rainforest QA If:

- You have zero in-house QA and want a hybrid human + automation model to start

- You want a global network of QA professionals to supplement automated tests with manual and exploratory coverage

- Edge cases and exploratory coverage are as important as regression checks, and you can accept slower feedback cycles in exchange for human judgment

- Cross-device and mobile coverage from human testers is a genuine priority

- You’re comfortable paying usage-based rates for the crowd model’s breadth

When Another Alternative Fits Better Than BugBug

BugBug is built for web-only SaaS teams that want codeless automation with local execution and flat pricing. Here's when another tool on this list is the more honest recommendation.

- TestSigma — Your testing scope covers web, mobile, and API and you need all three from one platform.

- Autify — You need unified no-code coverage across web and mobile, with multi-browser support and dedicated commercial onboarding.

- LambdaTest — You already have test scripts and need scalable cross-browser execution across many environments.

- Mabl — You're an enterprise DevOps team where frequent UI changes make AI self-healing worth the cost.

- QA Wolf — You want fully managed automated testing with a zero-flake guarantee and no in-house automation capacity to build it yourself.

- Testim — You manage a large web UI test suite where interface changes cause ongoing breakage that AI self-healing would meaningfully reduce.

- Global App Testing — Mobile testing across real devices, geographic distribution of testers, or localization coverage are first-class requirements.

- Sauce Labs — Your team has existing test scripts and needs enterprise-grade cross-browser execution with analytics and error monitoring.

- Virtuoso QA — Your team specifically prefers writing tests in natural language over a visual recorder, and text-based authoring is the adoption dealbreaker.

- Cypress or Playwright — Your team has JavaScript engineers who want full framework control and no SaaS dependency at scale.

- Selenium — Multi-language support is required and you're integrating into an existing ecosystem already built on Selenium tooling.

If you’re on the fence, BugBug’s free plan is the lowest-friction way to find out if the self-managed approach fits your team. First test in under 10 minutes — no credit card.

TestSigma

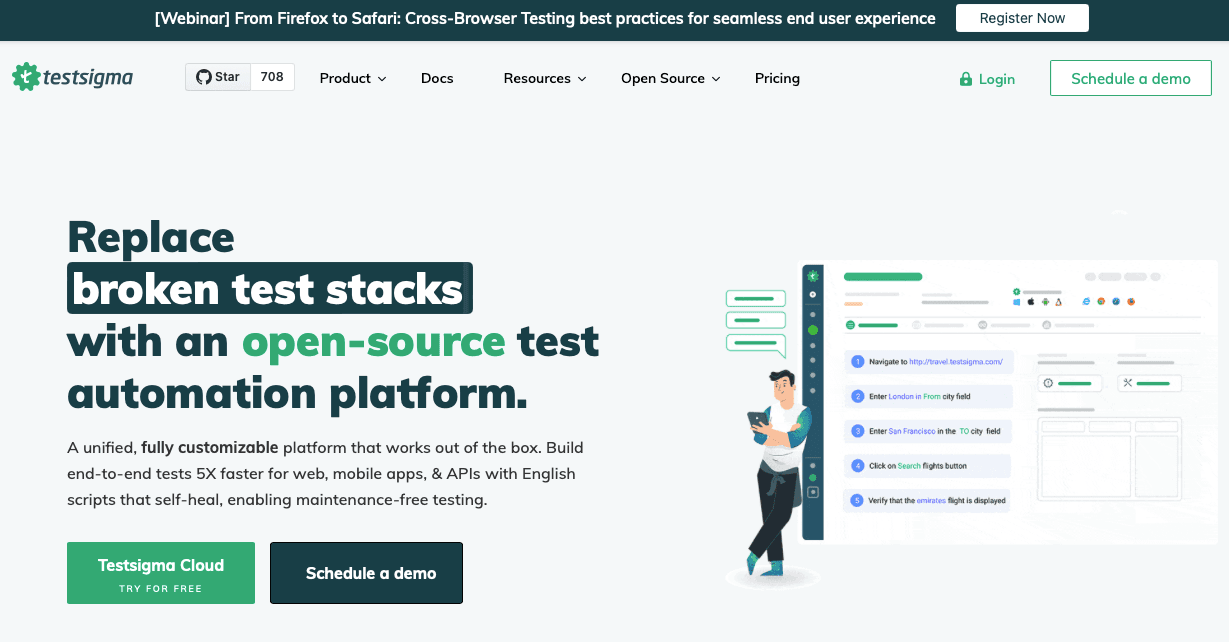

Best for: Non-technical teams needing AI-powered coverage across web, mobile, and API at scale — in a single platform.

TestSigma is a cloud-based test automation platform that lets teams create tests using natural language and a visual interface. As a no-code platform, it supports both automated and manual tests, including API testing, which makes it a fit for organizations seeking broad test coverage across multiple surfaces. It combines codeless authoring with AI-driven maintenance.

Strengths:

- Natural language test creation: Write test steps in plain English — no recording or scripting required. Accessible to non-technical stakeholders.

- Unified multi-platform coverage: Tests web, mobile, and API from a single platform, with cloud execution infrastructure included.

- Streamlined test automation process: Simplifies test creation and maintenance, enabling teams to efficiently scale and manage their automated testing for better software quality and faster releases.

- Visual regression testing: Detects unintended visual changes to keep the UI consistent across platforms.

- Agentic AI features: Copilot and Atto assist with autonomous test planning, generation, and failure analysis.

Limitations:

- No free plan: Entry cost is high relative to web-only tools — harder to evaluate before committing.

- Codeless in name, often not in practice: Complex scenarios frequently require scripting despite the platform’s codeless positioning.

- Overkill for web-only teams: The multi-platform scope adds configuration overhead if your testing is purely web-based.

- Fully cloud-dependent: No local execution option.

Autify

Best for: Agile teams wanting no-code web and mobile automation with solid CI/CD integration — and no crowd-testing model.

Autify is a no-code platform designed for unified test automation of web and mobile apps. It supports web and mobile testing through a visual recorder interface, with streamlined maintenance and integrations designed for fast-moving teams.

Strengths:

- Codeless test creation: Visual recorder interface — accessible for non-technical QA and product teams.

- Web and mobile in one platform: Cover both surfaces without switching tools.

- Automated and manual tests: Supports both automated and manual tests for comprehensive QA strategies.

- Simplified test creation and maintenance: Reduces time spent on upkeep compared to traditional frameworks, which helps teams scale automation and release faster.

- Comprehensive test coverage: Provides broad test coverage, including visual regression testing for UI consistency across browsers and devices.

Limitations:

- Paid-only: No free tier to evaluate before purchasing — requires a commercial conversation to get started.

- Mobile scope adds overhead: For web-only teams, the broader platform adds complexity and cost you don’t need.

- More configuration than web-first tools: Setup takes longer than the simplest browser-extension-based recorders.

- Per-seat pricing: Cost scales with team size, which can compound quickly for growing organizations.

LambdaTest

Best for: Teams that already have test scripts and need broad cross-browser cloud execution across many environments.

LambdaTest is a cloud platform providing access to 2,000+ browser and OS combinations for cross-browser testing, real device mobile testing, and parallel execution. It enables testing across multiple browsers and multiple versions for comprehensive compatibility. LambdaTest integrates with Selenium, Playwright, and Cypress as an execution layer on top of existing test suites.

Strengths:

- Massive browser coverage: 2,000+ browser and OS combinations, one of the broadest execution grids available as a service.

- Real device mobile testing: Test on actual devices, not emulators, so results reflect real hardware.

- Virtual machines for scalable execution: Leverages cloud-based virtual machines to run tests in parallel across different environments.

- Robust testing infrastructure: Provides a comprehensive, integrated platform for running automated tests at scale.

- Rapid test execution: Supports fast, distributed test runs to dramatically shorten test cycle times.

- Detailed test results: Offers comprehensive reporting, including logs and video recordings, to help teams analyze failures and improve their test automation process.

- Parallel execution: Run large test suites simultaneously for faster feedback cycles.

- Framework-agnostic: Works with whatever scripts your team already has — Selenium, Playwright, Cypress, Puppeteer.
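The benefit of parallel execution is easy to see in a toy model. The sketch below uses Python's standard thread pool to run several simulated tests at once; `run_test` is a hypothetical stand-in that only sleeps, and nothing here reflects LambdaTest's actual API or infrastructure.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def run_test(name: str) -> tuple[str, bool]:
    """Hypothetical stand-in for one browser test: simulate work, then pass."""
    time.sleep(0.05)
    return name, True


def run_suite(names: list[str], workers: int) -> dict[str, bool]:
    """Run all tests using up to `workers` concurrent threads."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return dict(pool.map(run_test, names))


names = [f"test_{i}" for i in range(8)]

for workers in (1, 4):
    start = time.perf_counter()
    results = run_suite(names, workers=workers)
    elapsed = time.perf_counter() - start
    print(f"workers={workers}: {sum(results.values())}/{len(results)} passed in {elapsed:.2f}s")
```

With one worker the suite takes roughly the sum of all test durations; with four workers it takes roughly a quarter of that, as long as the tests do not depend on shared state.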

Limitations:

- Execution only — not a test creation tool: LambdaTest runs your tests. You still need to write them elsewhere, in code, before you can use the platform.

- Requires coding: Non-technical team members can’t use LambdaTest to build tests — it needs an automation engineer or developer to create the scripts it runs.

- Learning curve: The platform assumes technical familiarity with testing frameworks.

- Virtual machine inconsistency: Performance on cloud VMs can vary, occasionally producing flaky results that don’t reflect actual application behavior.

Mabl

Best for: Enterprise DevOps teams running continuous testing at scale who need AI-driven test maintenance and deep CI/CD pipeline integration.

Mabl is an AI-native test automation platform for UI and API testing. It uses machine learning to automatically adapt tests when your application's interface changes — reducing the manual effort of keeping a large test suite current as your product evolves. Test creation is low-code through a visual interface, with cloud execution and detailed reporting built in.

Strengths:

- Auto-healing tests: Machine learning automatically updates broken locators when UI changes, reducing the maintenance burden on large, fast-moving test suites without manual intervention.

- UI and API testing combined: Covers both surface types from a single platform — useful for teams that need to validate front-end flows and backend endpoints together.

- Deep CI/CD integration: Native integrations with Jenkins, GitHub Actions, Azure DevOps, and CircleCI for continuous testing in complex pipelines.

- Visual regression testing: Automatically detects visual changes across runs, not just functional failures.

- Low-code test creation: Visual recorder accessible to non-developers, with the option to extend tests with custom logic where needed.

Limitations:

- Pricing: Mabl is positioned at the enterprise tier — not practical for startups or small teams with limited QA budgets.

- Steeper learning curve than simpler tools: Despite its low-code positioning, getting full value from Mabl's AI features and reporting takes meaningful onboarding investment.

- Overkill for stable applications: Auto-healing delivers ROI when your UI changes frequently. Teams with stable, well-maintained applications pay for AI infrastructure that rarely activates.

QA Wolf

Best for: Teams that want automated browser testing at scale without hiring or training in-house automation engineers — and are willing to trade direct control for a fully managed service.

QA Wolf is a fully managed test automation service built on the Playwright framework. Rather than giving your team a tool to use, QA Wolf's engineers write, run, and maintain your automated tests for you — backed by a zero-flake guarantee and a 24-hour turnaround on test maintenance. It's a fundamentally different model from self-managed platforms: you define what needs testing, and QA Wolf handles the rest.

Strengths:

- Zero-flake guarantee: QA Wolf commits to eliminating flaky tests — a meaningful promise for teams where test reliability has eroded trust in automation.

- Fully managed execution and maintenance: Your team doesn't write or maintain test scripts. QA Wolf handles creation, updates, and ongoing upkeep with 24-hour turnaround.

- Built on Playwright: Tests run in a well-supported, actively developed open-source framework — giving you a degree of portability that proprietary platforms don't.

- Fast test creation at scale: Suited to teams that need broad automated coverage quickly and don't want to build the capability in-house.

- Parallel execution: Runs tests in parallel without your team managing the infrastructure.
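A quick calculation shows why even a small flake rate erodes trust in a large suite. The sketch below assumes every test flakes independently at the same rate, which is a simplification, and the rates used are illustrative rather than measured figures from any vendor.

```python
def suite_pass_probability(per_test_flake_rate: float, test_count: int) -> float:
    """Chance that a suite of otherwise healthy tests goes fully green in one run,
    assuming each test flakes independently at the same rate."""
    return (1.0 - per_test_flake_rate) ** test_count


# Illustrative flake rates applied to a 100-test suite.
for rate in (0.001, 0.01, 0.05):
    chance = suite_pass_probability(rate, test_count=100)
    print(f"flake rate {rate:.1%}: fully green run {chance:.1%} of the time")
```

Under these assumptions, a 1% per-test flake rate across 100 tests yields a fully green run only about 37% of the time, so most red builds would be noise rather than real regressions.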

Limitations:

- Less direct control: In a managed service model, you don't write or directly modify individual tests. Teams that want to inspect, adjust, and own their test logic at the step level will find this limiting.

- Pricing: Fully managed services carry a premium over self-managed tools and typically cost significantly more per test than running your own automation.

- Dependency on a third party for changes: When your application changes, you're dependent on QA Wolf's 24-hour cycle to update tests rather than making the change immediately yourself.

- Less suitable for fast-iteration teams: If your development cycle is very rapid and you need test updates in minutes rather than hours, the managed turnaround model can become a bottleneck.

Testim

Best for: Enterprise web teams managing large UI test suites across products where frequent interface changes create ongoing test maintenance overhead.

Testim is an AI-powered test automation platform for web UI testing. Its core capability is self-healing: when your application's interface changes, Testim's AI-driven locators automatically adapt rather than breaking. It supports both codeless and code-based test creation, giving teams the option to mix visual authoring with custom scripting for complex scenarios.

Strengths:

- AI self-healing locators: Automatically adapts to UI changes, reducing the selector maintenance burden on large, fast-moving test suites without manual intervention.

- Codeless and code-based flexibility: Visual test creation for non-developers, with the option to extend tests with custom JavaScript for scenarios that fall outside no-code capabilities.

- Scales for large test suites: Built for organizations running hundreds or thousands of UI tests across products with active development cycles.

- Strong CI/CD integration: Designed to fit into enterprise DevOps pipelines with support for common CI tools.

Limitations:

- No free plan: Entry pricing falls in the range of $450 to $1,000+ per month, a significant barrier for small and mid-size teams evaluating options.

- AI maintenance opacity: Self-healing locators can silently update to point at the wrong element, creating false positives where tests pass but are no longer testing what you expect.

- Overkill for stable applications: If your UI doesn't change frequently, the AI maintenance capability rarely activates — you're paying for infrastructure you don't need.

- Web-focused only: No mobile or desktop testing support.

- Complexity for simple needs: The platform's depth is more than most early-stage or web-only teams require.
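The false-positive risk of self-healing locators can be illustrated with a deliberately simple model. The sketch below is not Testim's algorithm: the attribute-overlap score, the `threshold` parameter, and the sample elements are all assumptions made up for the example.

```python
from typing import Optional

Element = dict[str, str]


def similarity(recorded: Element, candidate: Element) -> float:
    """Fraction of the recorded attributes that the candidate matches exactly."""
    matches = sum(1 for key, value in recorded.items() if candidate.get(key) == value)
    return matches / len(recorded)


def find_with_healing(recorded: Element, page: list[Element], threshold: float) -> Optional[Element]:
    # 1. Prefer an exact match on the primary locator (the id).
    for element in page:
        if element.get("id") == recorded["id"]:
            return element
    # 2. Otherwise "heal": pick the most similar element if it clears the threshold.
    best = max(page, key=lambda element: similarity(recorded, element), default=None)
    if best is not None and similarity(recorded, best) >= threshold:
        return best
    return None


# What the test recorded originally.
recorded = {"id": "submit-order", "tag": "button", "class": "btn-primary", "text": "Submit order"}

# The page after a redesign: the intended button was renamed and restyled,
# while an unrelated button still shares the old tag and class.
page = [
    {"id": "place-order", "tag": "button", "class": "btn-cta", "text": "Place order"},
    {"id": "submit-review", "tag": "button", "class": "btn-primary", "text": "Submit review"},
]

print(find_with_healing(recorded, page, threshold=0.5))   # heals to the wrong button
print(find_with_healing(recorded, page, threshold=0.75))  # refuses to heal
```

With the permissive threshold, the locator silently "heals" to the review button and the test may keep passing while exercising the wrong element. A stricter threshold fails loudly instead, which costs a manual fix but keeps the test honest.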

Global App Testing

Best for: Teams that want the coverage benefits of crowdsourced human testing — particularly across mobile devices, geographies, and edge cases — with faster turnaround than Rainforest QA's model provides.

Global App Testing combines crowdsourced human testing with automation to deliver functional testing results across real devices and global environments. Like Rainforest, it uses human testers to validate application flows — the core differentiator is execution speed, with results delivered within 6 hours from testers distributed globally.

Strengths:

- Real device coverage: Tests run on actual Android and iOS devices, not emulators.

- Human insight on edge cases: Catches usability issues, localization problems, and unexpected behaviors that automated tools miss.

- Flexible engagement model: Can be used for exploratory testing, functional validation, or regression coverage depending on the engagement type.

Limitations:

- Not suitable for continuous automated regression testing: Human-based execution, even at 6 hours, is too slow for per-commit CI gating or hourly monitoring.

- Variability in results: Human testers interpret test steps differently — results are not fully deterministic across runs.

- Less control over individual test execution: You define what to test, but you don't control precisely how each step is executed.

When to consider Global App Testing over Rainforest QA: When you need the human testing model but want faster turnaround, broader device coverage, or geographic distribution of testers. If your goal is shifting to deterministic automated regression testing, both Global App Testing and Rainforest are the wrong category — fully automated tools will serve that need better.

Sauce Labs

Best for: Enterprise teams with existing automated test scripts that need comprehensive cross-browser execution infrastructure, test analytics, and error monitoring in one platform.

Sauce Labs delivers enterprise-grade testing infrastructure combining automated test execution, visual testing, error monitoring, and a modular test toolchain. It operates as an execution platform — your team brings the test scripts (in Selenium, Playwright, Cypress, or Appium) and Sauce Labs provides the cloud infrastructure, device coverage, and reporting layer on top. It's a more analytically rich alternative to pure execution platforms like LambdaTest or BrowserStack for teams that want insight into test health trends alongside raw coverage.

Strengths:

- Comprehensive execution infrastructure: Broad browser, OS, and real device coverage for running automated tests at scale.

- Test analytics and error analysis: Detailed reporting on test performance, flakiness trends, and failure classification — more analytical depth than most execution platforms.

- Error monitoring integration: Combines test execution data with application error monitoring for a broader view of quality.

Limitations:

- Requires existing test scripts: Sauce Labs is purely an execution platform — your team needs automated testing scripts in a supported framework before you can use it. It provides no way to create tests.

- Requires coding: Non-technical QA team members can't use Sauce Labs to build or author automated tests without a developer writing the scripts it runs.

- Expensive at scale: Among the higher-priced execution platforms — parallel session costs compound significantly for large test suites.

- Complexity for simple needs: The platform's depth is overkill for teams running small or web-only test suites without complex reporting requirements.

Virtuoso QA

Best for: Non-technical teams that prefer writing tests in natural language over using a visual recorder — and want a more modern platform roadmap than Rainforest QA offers.

Virtuoso QA is a test automation platform that lets teams create tests using natural language programming — writing test steps as plain English sentences that the platform interprets into executable automation. It's positioned as an accessible alternative for non-developers who want to contribute to the testing process without learning a visual recorder or a coding framework. Virtuoso is generally noted for being easier to set up than Rainforest QA and having a more actively developed product roadmap.

Strengths:

- Natural language test creation: Write tests in plain English — accessible to product managers, business analysts, and non-technical stakeholders who wouldn't use a visual recorder.

- Claimed maintenance reduction: Virtuoso's approach is designed to reduce the time teams spend updating tests when the application changes.

- Easier setup than Rainforest: Lower configuration overhead to get from account creation to first running test compared to Rainforest's onboarding process.

Limitations:

- Natural language ambiguity: Plain English test instructions can be interpreted in more than one way — particularly on complex pages with multiple similar elements. Precision requires careful instruction writing.

- Less debugging control: When a natural language test fails, isolating exactly which step caused the failure is harder than with a visual recorder where each step is explicitly captured.

- Smaller ecosystem: Fewer community resources, third-party integrations, and documented solutions compared to established platforms.
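The ambiguity problem with plain-English steps is easier to see with a toy interpreter. The sketch below is not how Virtuoso QA parses instructions; the step grammar, the `candidates_for_step` function, and the sample page are hypothetical and far simpler than any real natural language engine.

```python
import re

Element = dict[str, str]

# Hypothetical step grammar: Click the "<label>" button|link [in the <section> section]
STEP_PATTERN = re.compile(
    r'click the "(.+?)" (button|link)(?: in the (.+) section)?',
    flags=re.IGNORECASE,
)


def candidates_for_step(step: str, elements: list[Element]) -> list[Element]:
    """Return every element that the step could plausibly refer to."""
    match = STEP_PATTERN.search(step)
    if match is None:
        raise ValueError(f"unsupported step: {step!r}")
    label, role, section = match.group(1), match.group(2).lower(), match.group(3)
    found = [
        element for element in elements
        if element["role"] == role and label.lower() in element["label"].lower()
    ]
    if section is not None:
        found = [element for element in found if element.get("section") == section]
    return found


page = [
    {"role": "button", "label": "Save", "section": "header"},
    {"role": "button", "label": "Save", "section": "billing form"},
    {"role": "link", "label": "Save for later"},
]

vague = candidates_for_step('Click the "Save" button', page)
precise = candidates_for_step('Click the "Save" button in the billing form section', page)
print(len(vague), "candidates for the vague step")
print(len(precise), "candidate for the precise step")
```

The vague step matches two different buttons, so the tool has to guess; the more carefully written step narrows it to one. That is the practical meaning of "precision requires careful instruction writing."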

When You Should Consider an Open-Source Framework Instead

If your team has JavaScript engineers and wants full ownership over test architecture, with no SaaS pricing and no vendor dependency, open-source frameworks are worth the investment for long-term scalability. However, they require solid coding knowledge and significant ongoing effort to update test scripts as your application evolves. Brittle tests are a common challenge and often lead to flaky results and growing maintenance overhead, especially as applications grow and change frequently.

Playwright is a modern, high-speed, open-source framework by Microsoft that supports multiple languages including JavaScript, Python, Java, and .NET. It is highly reliable for cross-browser testing across Chromium, Firefox, and WebKit.

Cypress is a popular, fast, and reliable JavaScript-based end-to-end testing framework.

Choose Cypress or Playwright if:

- You have strong JavaScript engineering resources available for test development and maintenance

- Full scripting flexibility and custom test architecture are non-negotiable

- You're comfortable managing CI, test runners, and reporting infrastructure yourself

- Playwright specifically: Cross-browser coverage across Chromium, Firefox, and WebKit from a single API is a genuine requirement

Choose Selenium if:

- You need multi-language support (Java, Python, C#, Ruby)

- You're integrating into a large existing automation ecosystem built on Selenium tooling

- Maximum browser flexibility and a mature, widely-supported community are the priority

Open-source frameworks offer maximum control — but require full ownership. Your team manages the infrastructure, maintains the framework, and writes every test in code. For non-technical QA teams or organizations without dedicated automation engineers, that overhead often negates the flexibility benefit. Start here only if coding resources are genuinely available for the long term.

Rainforest QA Alternatives — Final Thoughts

Rainforest QA solves a real problem: getting testing coverage when you have no in-house QA expertise and no time to build automation from scratch. When evaluating Rainforest QA alternatives, it's important to consider how each platform supports your test automation process and enables efficient test coverage. The crowd model is genuinely useful for teams at that early stage — especially when exploratory testing and edge case discovery matter as much as regression coverage.

However, many QA engineers are rethinking their tooling to find solutions that provide reliable, detailed test results without high costs. As teams mature, the trade-offs compound. Crowd testing is slower, more variable, and more expensive per test run than self-managed automation. For most SaaS teams that have moved past the “we have no QA at all” stage, deterministic automated regression testing is a better investment than paying for a human to click through your flows.

The question to answer before choosing any alternative isn’t “which tool is best” — it’s: do you need a human to validate your flows, or do you need automation to catch regressions before they reach production? Choosing the right test automation tool is essential for optimizing QA processes, balancing your budget, and ensuring comprehensive testing.

Happy (automated) testing!