- From a Creation Bottleneck to a Validation Bottleneck

- Fast Code Doesn’t Mean Better Code

- The Collapse of Determinism Changes Testing Forever

- Why “Vibe Checks” Can’t Be Automated

- QA as the Guardian of Ethics, Bias, and Liability

- What This Means for QA Teams in Practice

- Conclusion: QA Owns the “Should We?”

- Software Testing in the Age of AI: From Execution to Validation

- BugBug: A Practical Tool for Sustainable Test Automation

For the last couple of years, there’s been a recurring prediction in tech conversations: QA will be the first role AI replaces.

The argument sounds reasonable on the surface. Testing is already heavily automated. AI can generate test cases, write scripts, and even simulate user flows. If software creation is becoming cheaper and faster, surely the people who “just test” it are next on the chopping block.

AI in quality assurance, meaning the integration of artificial intelligence and machine learning into QA workflows, does fundamentally change how testing is approached.

But the “QA goes first” framing misunderstands what’s actually changing.

AI isn’t eliminating the need for quality assurance. It’s shifting the industry from a creation bottleneck to a validation bottleneck. And that shift makes QA more critical—not less.

As AI accelerates software delivery, the real scarcity is no longer code. It’s trust.

From a Creation Bottleneck to a Validation Bottleneck

For most of software history, writing code was expensive. Engineering time was the constraint. QA existed downstream of that constraint: once code was written, it needed to be verified.

AI flips that equation.

With copilots, agents, and “vibe coding,” teams can generate features, services, and integrations faster than humans can realistically review them. The problem is no longer “can we build this?” but “should we trust what we just built?”

Every line of AI-generated code that looks plausible but hasn’t been deeply validated increases risk:

- misunderstood business logic

- subtle security flaws

- incorrect assumptions baked in at scale

- edge cases no one explicitly reasoned about

AI in QA is not about replacing human testers. It is about transforming the QA process by automating routine tasks and reducing manual effort, so that QA teams can focus on more complex and strategic work.

As code becomes cheaper, mistakes become more expensive. And that pushes validation—not generation—into the critical path.

Fast Code Doesn’t Mean Better Code

One of the most persistent myths around AI is that it improves code quality by default.

In reality, AI improves throughput, not judgment.

Generative models work probabilistically. They predict what looks right based on patterns in existing code. They don’t understand your system’s history, your constraints, your regulatory exposure, or why a certain workaround exists in a legacy flow.

This creates a dangerous gap: AI-generated code often passes superficial checks while embedding deeper issues:

- logic that’s “mostly correct” but wrong in edge conditions

- dependencies that interact badly under load

- security assumptions copied from contexts that don’t apply

Unit tests might pass. Happy paths look fine. The problems emerge later—often in production—when real users do things no prompt anticipated.

This is where QA stops being about checking outputs and starts being about challenging assumptions.

The Collapse of Determinism Changes Testing Forever

Traditional software testing was built on determinism.

If a user does A, the system should do B. You could write a test, automate it, and rely on the same outcome every time. Most testing tools and frameworks still assume this model.

AI breaks it.

Modern applications increasingly embed non-deterministic components: LLMs, recommendation engines, adaptive systems. The same input can produce multiple valid outputs. Sometimes that’s the feature.

Now ask a simple testing question: How do you assert correctness when behavior is qualitative?

You’re no longer testing exact results. You’re testing:

- boundaries

- failure modes

- tone

- bias

- safety

- user perception

This shifts QA from deterministic verification to risk-based evaluation. And that kind of evaluation is inherently human.
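To make the shift from exact results to boundaries concrete, here is a minimal sketch of sampling a non-deterministic component several times and counting how often each reply breaks an agreed rule. Everything in it is an assumption for illustration: `fake_assistant` is a hypothetical stand-in for an LLM call, and the four checks are example boundaries, not a recommended set.

```python
import random
from typing import Callable

# Hypothetical stand-in for a non-deterministic component such as an LLM call.
def fake_assistant(prompt: str, rng: random.Random) -> str:
    openers = ["Sure, here is a summary:", "Happy to help.", "Here you go:"]
    return f"{rng.choice(openers)} Your order {prompt} ships in 3 days."

# Each check returns True when a reply stays inside an agreed boundary.
# These rules are illustrative assumptions, not a complete policy.
CHECKS: dict[str, Callable[[str], bool]] = {
    "not_empty": lambda reply: bool(reply.strip()),
    "under_200_chars": lambda reply: len(reply) <= 200,
    "no_guarantee_language": lambda reply: "guarantee" not in reply.lower(),
    "mentions_order": lambda reply: "order" in reply.lower(),
}

def evaluate(
    generate: Callable[[str, random.Random], str],
    prompt: str,
    samples: int = 20,
    seed: int = 0,
) -> dict[str, int]:
    """Sample the component repeatedly and count violations per check."""
    rng = random.Random(seed)
    violations = {name: 0 for name in CHECKS}
    for _ in range(samples):
        reply = generate(prompt, rng)
        for name, check in CHECKS.items():
            if not check(reply):
                violations[name] += 1
    return violations

if __name__ == "__main__":
    print(evaluate(fake_assistant, "#1234"))
```

Checks like these only cover what can be written down as a rule. Whether a reply sounds manipulative or erodes trust still needs a human reviewer reading the samples.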

AI can generate tests. It cannot meaningfully decide whether something feels wrong, sounds manipulative, or creates user distrust.

Why “Vibe Checks” Can’t Be Automated

AI excels at metrics: speed, throughput, conversion tracking, latency. But quality—especially user-facing quality—is not purely measurable.

A checkout flow can be “functionally correct” and still feel broken. A chatbot can be “accurate” and still feel creepy. An onboarding flow can technically work while quietly driving users away.

This is where QA becomes the designated human in the system.

A good QA doesn’t just follow paths. They intentionally misuse the product. They notice friction. They question wording. They sense when something technically correct undermines trust.

That kind of judgment isn’t an edge case—it’s the difference between software that works and software people actually want to use.

Human expertise remains critical in the QA process, especially for complex scenarios and edge cases where human judgment is irreplaceable.

As AI lowers the barrier to shipping products, experience becomes the differentiator. And experience cannot be validated by models alone.

QA as the Guardian of Ethics, Bias, and Liability

There’s another uncomfortable reality AI hype often ignores: responsibility doesn’t disappear.

When AI-generated code leaks data, introduces bias, or causes harm, the accountability lands on humans—on companies, teams, and leadership. Not on the model.

This pushes QA into an even more strategic role: AI auditor.

Modern QA increasingly involves:

- probing systems for biased behavior

- stress-testing prompts and inputs for abuse

- checking how AI responds under adversarial conditions

- validating that safeguards actually work

This requires cultural context, ethical reasoning, and malicious curiosity. It requires someone who actively tries to break the system in ways no training dataset anticipated.
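As a rough illustration of validating that a safeguard actually works, the sketch below runs a small set of adversarial inputs through a filter and reports the ones that still leak. The `redact` function, its regular expression, and the input list are all hypothetical examples, not a real product's safeguard.

```python
import re
from typing import Callable, Iterable

# Hypothetical safeguard under test: redacts plain email addresses.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")

def redact(reply: str) -> str:
    return EMAIL.sub("[redacted]", reply)

# Illustrative adversarial inputs; a real list would be much longer and
# written by someone actively trying to get around the safeguard.
ADVERSARIAL_INPUTS = [
    "Contact me at jane.doe@example.com",
    "JANE.DOE@EXAMPLE.COM is my address",
    "two: a@b.io and c+d@e-f.org",
    "obfuscated: jane (at) example (dot) com",
]

def probe(safeguard: Callable[[str], str], inputs: Iterable[str]) -> list[str]:
    """Return the inputs whose output still appears to leak an address."""
    leaks = []
    for text in inputs:
        output = safeguard(text)
        if "@" in output or "(at)" in output:
            leaks.append(text)
    return leaks

if __name__ == "__main__":
    for leaked in probe(redact, ADVERSARIAL_INPUTS):
        print("safeguard bypassed by:", leaked)
```

In this sketch the obfuscated address slips through, which is the point: the harness surfaces the gap, and a person still has to decide whether it is an acceptable risk.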

With regulations like the EU AI Act emerging, documented human validation is moving from “best practice” to legal necessity. QA’s sign-off becomes a form of risk ownership, not just a testing checkbox.

What This Means for QA Teams in Practice

QA roles won’t disappear—but they will evolve.

The future QA isn’t defined by how many test cases they execute. It’s defined by how well they:

- understand system intent

- identify risk introduced by automation

- design meaningful validation strategies

- keep test suites maintainable as complexity explodes

Notably, 97% of companies reported an increase in QA productivity after introducing AI into their processes, and automating routine tasks helps prevent burnout among QA engineers by reducing repetitive manual effort.

This is also why structured test automation still matters, even in an AI-driven world.

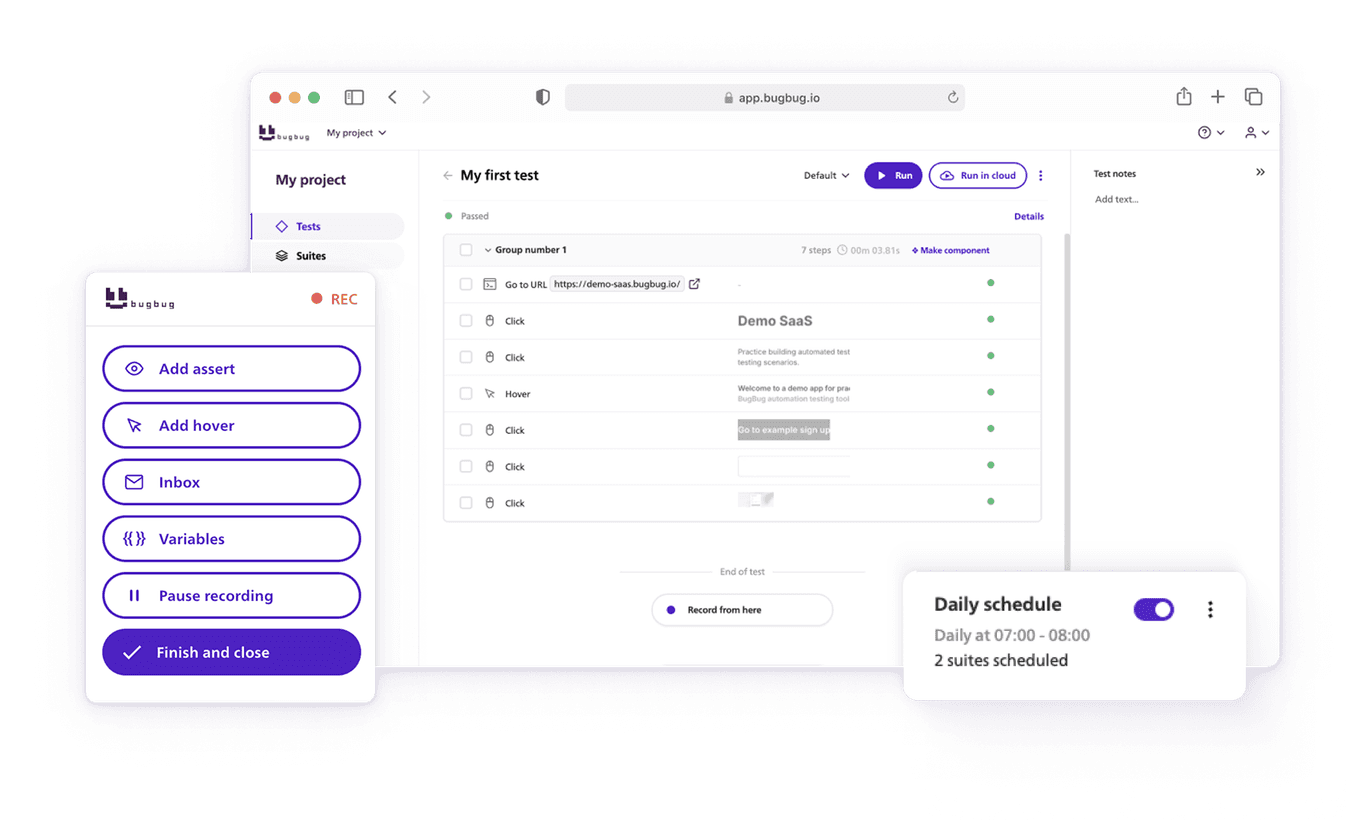

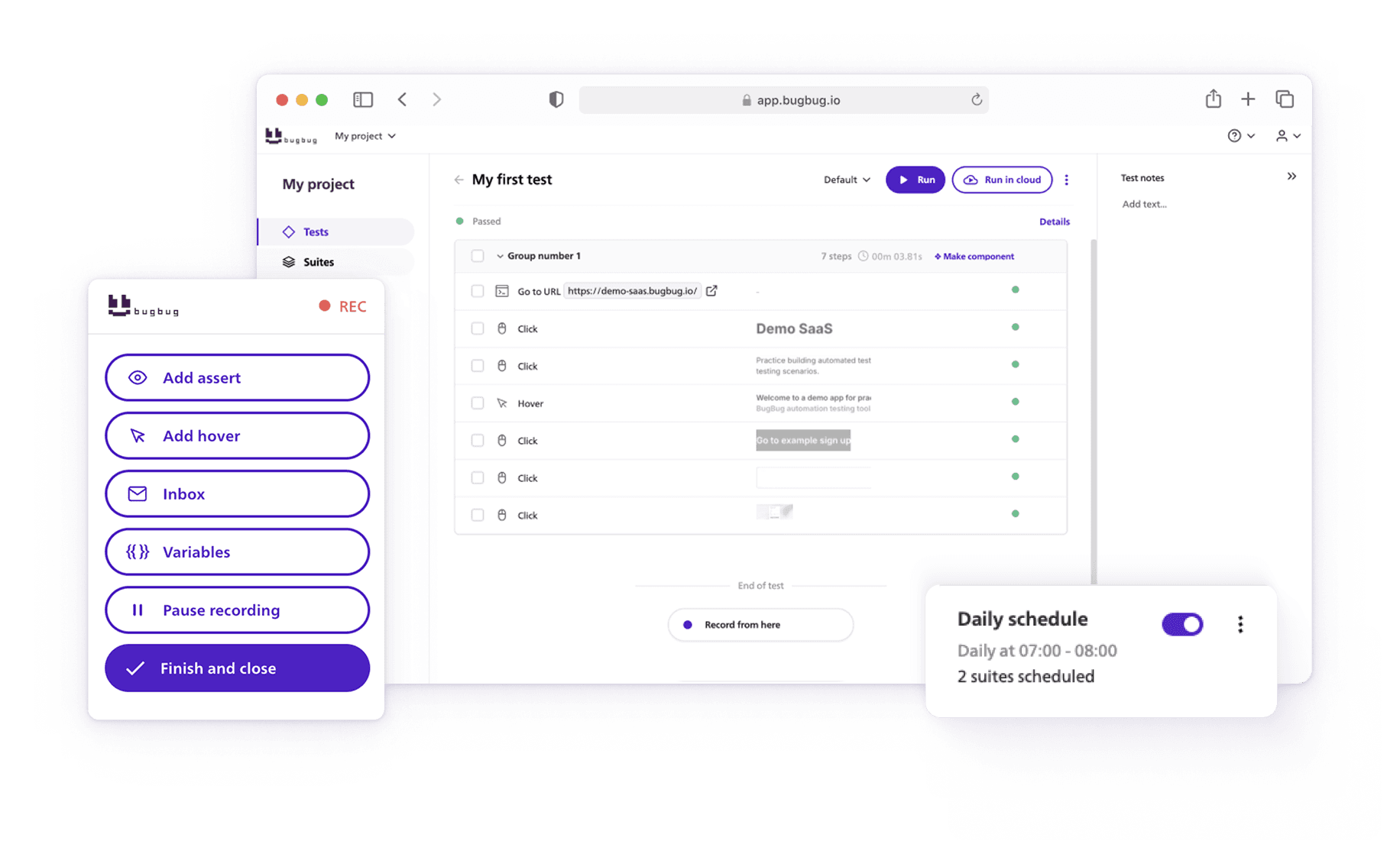

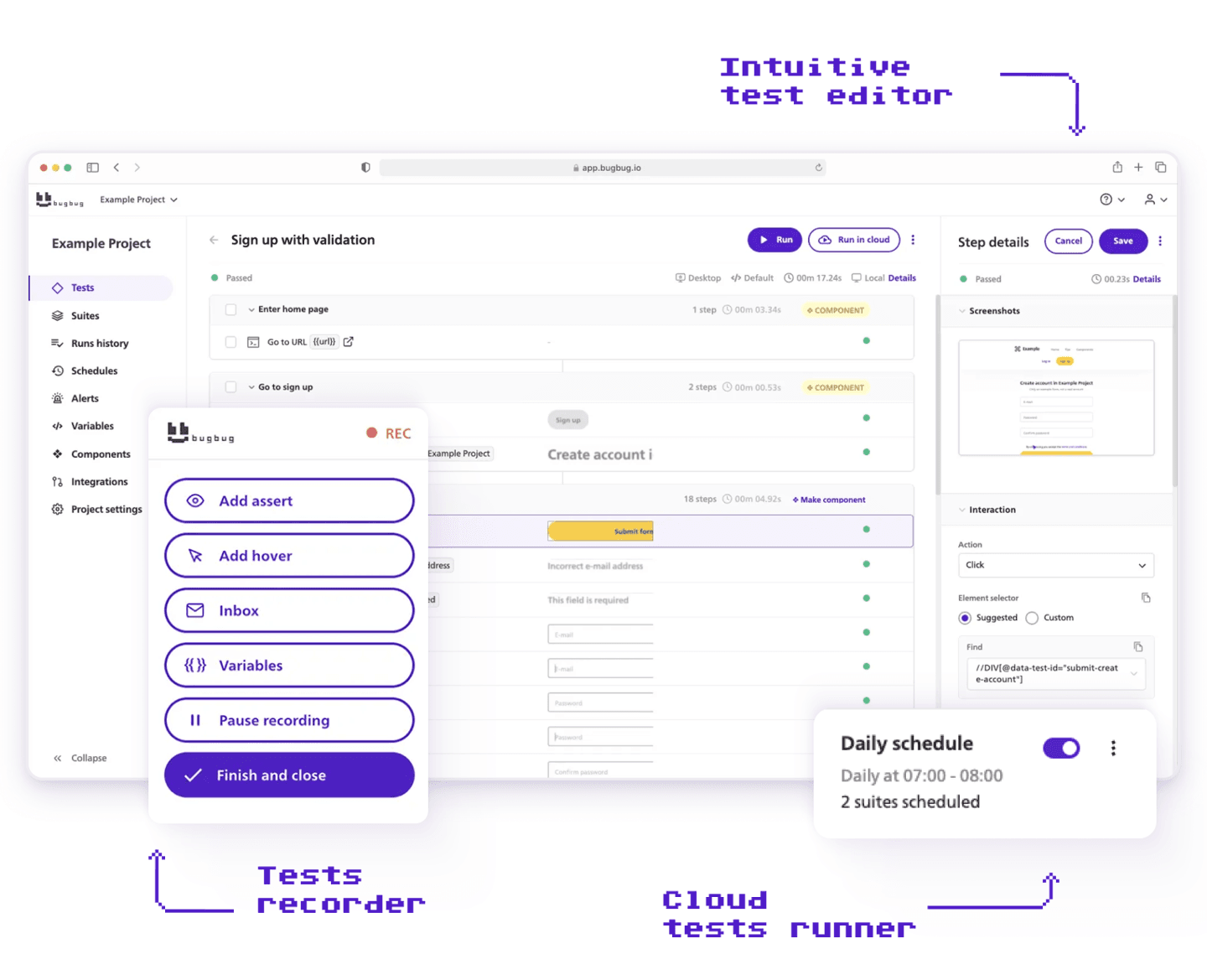

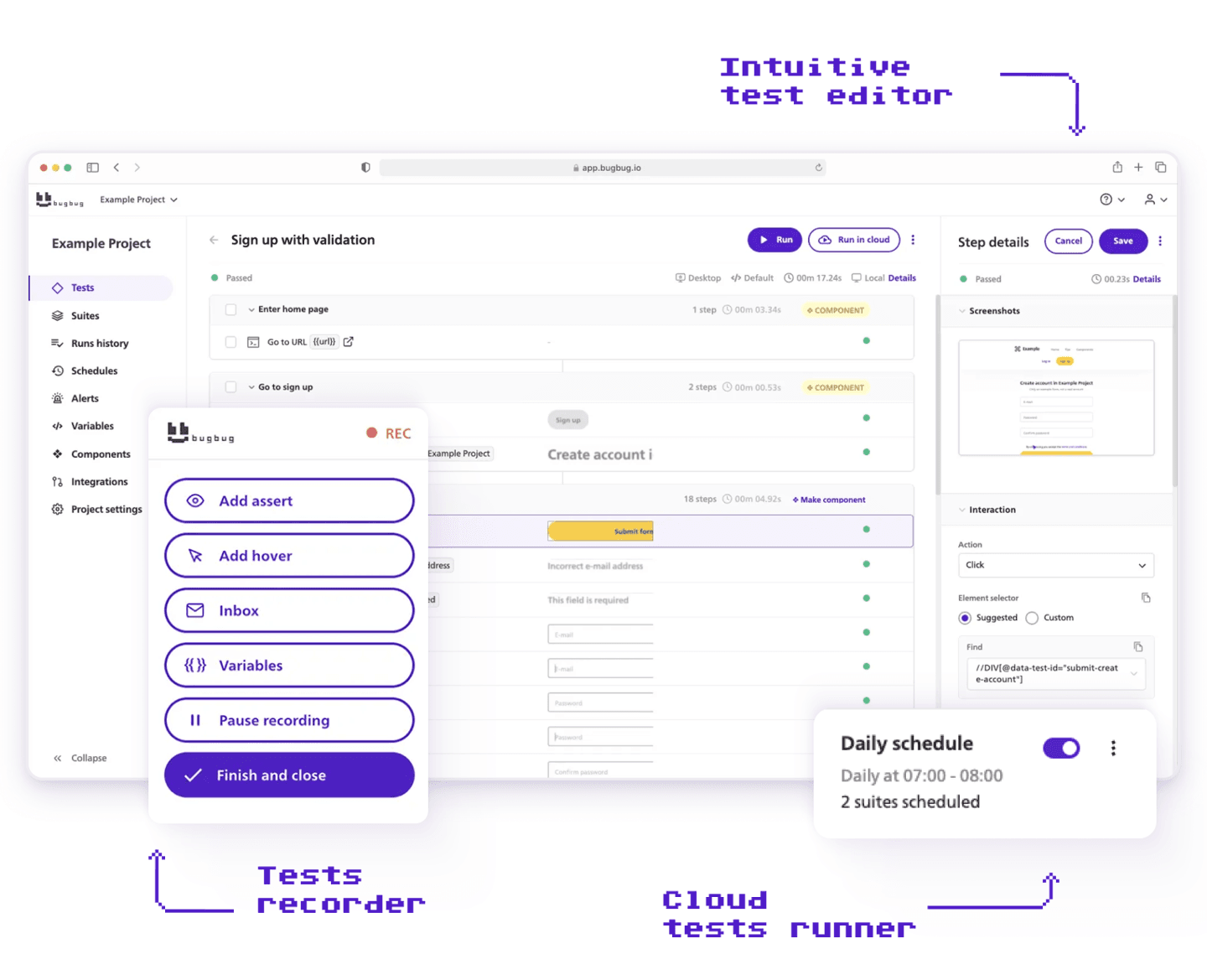

Tools like BugBug deliberately focus on readable, step-based test flows instead of opaque, fully AI-generated scripts. When AI helps generate steps or speed up setup, that’s valuable—but the final test logic remains understandable and controllable by humans.

That matters because in a validation bottleneck, clarity beats cleverness.

Traditional testing methods rely heavily on manual effort, which invites human error and often creates bottlenecks in software delivery. AI-driven approaches automate routine tasks and improve test coverage by analyzing code for untested areas.

That frees QA teams to focus on higher-value activities and to deliver quality software faster and with greater confidence.

Conclusion: QA Owns the “Should We?”

AI is rapidly commoditizing the how of software.

The value is shifting to the people who own the why and the should we.

QA was never just about clicking buttons or executing scripts. At its best, it has always been about skepticism, system thinking, and protecting users from unintended consequences. AI doesn’t eliminate that need—it amplifies it.

As software becomes easier to create, deploying it responsibly becomes harder.

That’s why QA won’t be the first job to disappear in the age of AI.

It will be one of the last lines of defense.

And in a world of infinite generation, that might be the most valuable role of all.

Software Testing in the Age of AI: From Execution to Validation

Software testing has never been just about finding bugs—but AI makes that clearer than ever.

As AI accelerates feature development, the volume of change increases while confidence in correctness decreases. Code is generated faster than teams can meaningfully review it, and traditional testing models, built around deterministic behavior, start to crack under the weight of probabilistic systems, rapid iteration, and opaque logic. At the same time, AI is transforming the testing process itself, enabling more efficient test generation, execution, and maintenance.

In this environment, testing shifts from execution to validation.

QA teams are no longer simply verifying that “if A happens, then B occurs.” They are validating that:

- behavior aligns with intent

- edge cases haven’t been silently introduced

- user experience still makes sense

- automation failures are real failures, not test noise

AI-powered tools can improve test coverage by analyzing code for gaps and generating the missing tests, which directly addresses insufficient coverage in complex applications.

This raises the bar for testing tools. Automation that merely runs fast is no longer enough. Tests must be readable, maintainable, and trustworthy, especially when they are validating software influenced—or partially generated—by AI. AI can significantly reduce the time spent on test maintenance by automatically updating test scripts when applications change.

Flaky tests, hidden logic, and overly clever automation actively harm teams under these conditions. When releases accelerate, QA cannot afford tools that obscure what is being tested or why. Many QA teams struggle with tight release deadlines, and AI-powered testing tools can help relieve that pressure.
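One simple way to separate real failures from test noise is to rerun a test a few times and label the result. The sketch below assumes a test can be represented as a callable that returns `True` on success. The `classify` and `scripted` helpers are hypothetical names used for illustration, not part of any particular framework.

```python
from collections import Counter
from typing import Callable, Iterable

def classify(run_test: Callable[[], bool], reruns: int = 5) -> str:
    """Rerun a test and label it 'pass', 'fail', or 'flaky'."""
    outcomes = Counter(run_test() for _ in range(reruns))
    if outcomes[True] == reruns:
        return "pass"
    if outcomes[False] == reruns:
        return "fail"
    return "flaky"

def scripted(results: Iterable[bool]) -> Callable[[], bool]:
    """Build a fake test that replays a fixed sequence of outcomes."""
    iterator = iter(results)
    return lambda: next(iterator)

if __name__ == "__main__":
    print(classify(scripted([True] * 5)))
    print(classify(scripted([False] * 5)))
    print(classify(scripted([True, False, True, True, True])))
```

A consistent failure points at the application, while a mixed result points at the test or its environment. Keeping those two apart is what stops a fast pipeline from training the team to ignore red builds.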

Modern software testing needs tools that help humans stay in control: clear test flows, predictable behavior, and fast feedback without sacrificing understanding. AI tools can also help prioritize test cases based on historical defect data, code changes, and code complexity metrics. Google uses AI algorithms to prioritize test cases for high-risk code fragments, while Microsoft employs predictive AI tools to forecast which code changes carry the highest risk of defects.
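The idea of prioritizing by defect history, code changes, and complexity can be sketched with a plain weighted score. This is a deliberately simple, hand-weighted heuristic rather than a trained model, and it does not represent how Google or Microsoft do it. The `CaseStats` fields and the weights are assumptions chosen for illustration.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class CaseStats:
    name: str
    past_failures: int          # failures seen in recent history
    touches_changed_code: bool  # does the test cover code changed in this release?
    complexity: int             # e.g. cyclomatic complexity of the covered code

def risk_score(
    case: CaseStats,
    w_fail: float = 3.0,
    w_change: float = 5.0,
    w_complexity: float = 0.5,
) -> float:
    """Combine the three signals into one number; the weights are arbitrary."""
    return (
        w_fail * case.past_failures
        + w_change * int(case.touches_changed_code)
        + w_complexity * case.complexity
    )

def prioritize(cases: list[CaseStats]) -> list[CaseStats]:
    """Order test cases from highest to lowest estimated risk."""
    return sorted(cases, key=risk_score, reverse=True)

if __name__ == "__main__":
    cases = [
        CaseStats("login", past_failures=0, touches_changed_code=False, complexity=4),
        CaseStats("checkout", past_failures=2, touches_changed_code=True, complexity=10),
        CaseStats("search", past_failures=1, touches_changed_code=False, complexity=6),
    ]
    print([case.name for case in prioritize(cases)])
```

Because the score is a visible formula, a tester can see why a test was ranked first and argue with the weights, which fits the article's point about keeping humans in control.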

AI can also automate regression testing, running hundreds of tests in parallel without human intervention, which greatly improves the efficiency of the QA process.

Machine learning models enable predictive defect detection by analyzing code patterns and historical defect data, helping teams focus testing effort on high-risk areas and optimize their testing strategies.

Integrating AI into QA workflows and CI/CD pipelines can help teams reduce maintenance time, increase efficiency, and enhance the speed and reliability of software releases.

BugBug: A Practical Tool for Sustainable Test Automation

BugBug is built around a simple idea: test automation should reduce risk, not add another layer of uncertainty.

BugBug focuses on solving the real problems QA teams face day to day—flaky tests, slow maintenance, and tools that are harder to understand than the systems they test.

At its core, BugBug is a web application testing tool designed to keep automation accessible, explicit, and stable.

The global AI in software testing market is projected to grow at a CAGR of over 18.7% between 2024 and 2033, and 43% of companies reported significant improvements in test coverage after adopting AI technologies. QA teams can start by identifying the areas where AI would have the greatest immediate impact and building from there.

Clear, Step-Based Test Creation

BugBug’s recorder captures real user interactions and turns them into readable test steps. This makes test creation fast for non-technical users, while keeping tests understandable and editable for more experienced testers and developers.

BugBug’s approach to test creation reduces the need for extensive coding skills, making automated testing accessible to a wider range of users. With advances in natural language processing (NLP), testers can now describe test scenarios in plain language, and AI can translate these descriptions into automated test scripts. Generative AI, such as large language models, can further assist by generating test code and test scripts directly from user-provided descriptions.

Tests don’t become black boxes after recording. They remain transparent and easy to adjust as the application evolves.

Stability Without “AI Magic”: The Role of Manual Testing

Instead of relying on opaque self-healing claims, BugBug tackles flakiness directly:

- automatic selector validation highlights broken selectors instead of silently masking failures

- active waiting conditions ensure elements are ready before interactions occur

Together, selector validation and active waiting reduce false negatives while keeping test behavior predictable and debuggable.

Separately, visual testing powered by machine learning can help detect UI inconsistencies and reduce false positives in automated testing.
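The general idea behind active waiting, as opposed to fixed sleeps, can be sketched as a small polling helper. This is a generic illustration and not BugBug's implementation. The `wait_until` name, its parameters, and the `becomes_ready_after` helper are assumptions.

```python
import time
from typing import Callable

def wait_until(
    condition: Callable[[], bool],
    timeout: float = 5.0,
    interval: float = 0.05,
) -> bool:
    """Poll `condition` until it returns True or `timeout` seconds pass."""
    deadline = time.monotonic() + timeout
    while True:
        if condition():
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval)

def becomes_ready_after(calls: int) -> Callable[[], bool]:
    """Fake readiness check that succeeds on the given call number."""
    state = {"seen": 0}
    def check() -> bool:
        state["seen"] += 1
        return state["seen"] >= calls
    return check

if __name__ == "__main__":
    # Simulates an element that is ready on the third check.
    print(wait_until(becomes_ready_after(3), timeout=1.0, interval=0.01))
    # Simulates an element that never appears.
    print(wait_until(lambda: False, timeout=0.05, interval=0.01))
```

The step proceeds as soon as the condition holds and fails with a clear timeout otherwise, which is easier to debug than a test that sometimes clicks too early.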

Built for Team Workflows

BugBug supports both local and cloud execution, allowing teams to run tests where it makes sense and scale execution when needed. Collaboration features and CI/CD integrations (GitHub, GitLab, Bitbucket, Jenkins) make automated testing part of the delivery pipeline rather than a separate activity.

AI can assist in generating synthetic test data that complies with privacy regulations. It can also help create comprehensive test scenarios and monitor user behavior, enabling teams to improve test coverage and testing outcomes.

Automation That Respects Human Judgment

BugBug deliberately avoids hiding logic behind probabilistic systems. While it automates repetitive work, the final source of truth remains the test itself—something a human can read, reason about, and trust.

By automating routine, repetitive tasks with AI-powered testing tools, QA leaders can address testing bottlenecks such as test data preparation and environment setup, significantly improving overall efficiency.

That design choice matters in an AI-driven development landscape. When application behavior is increasingly non-deterministic, testing tools must become more—not less—transparent. AI systems can reduce the need for human intervention in routine testing, allowing QA experts to focus on more strategic and complex issues.

BugBug doesn’t try to replace QA expertise. It exists to support it, making test automation faster to create, easier to maintain, and safer to rely on as software complexity continues to grow.

Happy (automated) testing!